Twitter Study Finds Coordinated Pattern in Posts About Purported Voter Fraud

Researchers have identified a network of Twitter accounts that’s pushing misleading narratives about U.S. election integrity

(Bloomberg) -- With the U.S. midterm elections days away, researchers have identified what they called a coordinated network of Twitter accounts that’s pushing false and misleading narratives about election integrity with hashtags like #VoterFraud.

They found a core of 200 accounts that tweeted or were mentioned in tweets more than 140 million times over the last year, according to a research report published Saturday.

The findings don’t necessarily reflect a reprise of the Russian influence efforts in the 2016 election, nor is the tweeting clearly driven by automated bots, researchers say. But the network of accounts, which sounds off at relatively regular intervals -- even at times when there’s nothing about the topic in the news -- has helped create an echo chamber to justify state-level ballot restrictions despite little evidence of actual voter fraud.

“There is a tragically ironic relationship between the perception that large groups of people are voting illegally,” while a small group of Twitter accounts is “wielding massive influence to spread disinformation, affecting the public’s understanding of voter fraud,” says the report. It was prepared by a volunteer group of researchers and technologists led by Guardians.ai, a New York startup that’s focused on protecting pro-democracy organizations from information warfare and cyber-attack.

Researchers couldn’t identify who was behind the coordination -- and they said the patterns they found suggest that online influence operations have evolved in subtle ways that avoid detection.

“We set out to provide a new way for the public to understand how influence works,” the report says. “Today a small group of people can wield increasingly more powerful AI, big data, and psychological targeting to influence society, and we feel that it’s a fundamental right to know who’s influencing you, how it’s happening and why.”

A spokesman for Twitter Inc. said in a statement that the research “helps us and the public understand how groups organize around topics and movements.”

“While we prohibit coordinated malicious behavior, and enforce accordingly, we’ve also seen real people who share the same views organize using Twitter,” the company’s statement said. “This report effectively captures what often happens when hot button issues gain attention and traction in active groups.”

In mid-September, researchers at Guardians.ai began digging into the hashtag #VoterFraud from the sparsely furnished Brooklyn apartment that serves as their headquarters.

Brett Horvath, one of the company’s three founders, first got into online organizing more than a decade ago, helping launch an app that allowed people in Washington state and Arizona to register to vote from their Facebook profiles. His cofounders, Zachary Verdin and Alicia Serrani, worked together at New Hive, a multimedia publishing platform for artists. Partners at the San Diego Supercomputer Center’s Data Science Hub (part of the University of California at San Diego) contributed to the research, which also used technology from Zignal Labs.

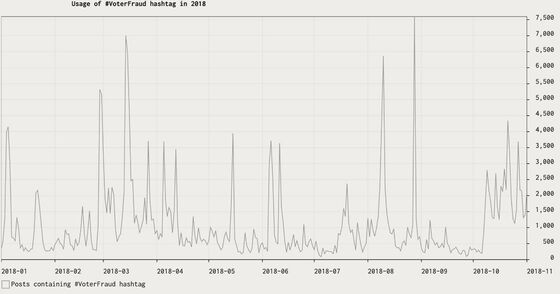

Horvath and Serrani discovered spikes in the hashtag on two days in August, when mentions jumped from hundreds a day to more than 6,500. Intrigued, they looked back 12 months, and then three years, and found the pattern of upticks repeating so regularly that the graph looked like a heartbeat.

They didn’t find much news to explain the spikes, but then Verdin looked at the accounts using the hashtag and noticed the same handles again and again. He also found similar spikes -- and a core of the same accounts -- mentioning related hashtags like #VoterID and #ElectionFraud.
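The two steps described above, spotting days when mentions jump far above normal and then checking which handles recur across those days, can be illustrated with a minimal Python sketch. It is not the researchers’ method, which the report summary here does not detail. The function names, the 10x-median threshold and the toy data are all assumptions made for illustration.

```python
from collections import Counter
from statistics import median


def find_spike_days(daily_counts, factor=10.0):
    """Return days whose mention count is at least `factor` times the median.

    The median baseline and the default factor are illustrative assumptions.
    """
    baseline = max(median(daily_counts.values()), 1)
    return sorted(day for day, n in daily_counts.items() if n >= factor * baseline)


def recurring_accounts(accounts_by_day, spike_days, min_spikes=2):
    """Return handles that appear on at least `min_spikes` of the spike days."""
    seen = Counter()
    for day in spike_days:
        # Count each handle once per day, however often it tweeted that day.
        seen.update(set(accounts_by_day.get(day, ())))
    return sorted(handle for handle, k in seen.items() if k >= min_spikes)


# Invented toy data: hundreds of mentions a day, with two days above 6,500.
daily_counts = {
    "2018-08-01": 300, "2018-08-02": 280, "2018-08-03": 6500,
    "2018-08-04": 310, "2018-08-05": 290, "2018-08-06": 6600,
    "2018-08-07": 305,
}
# Invented handles; none refers to a real account.
accounts_by_day = {
    "2018-08-03": ["@alpha", "@bravo", "@charlie"],
    "2018-08-06": ["@alpha", "@bravo", "@delta"],
    "2018-08-07": ["@echo"],
}

spikes = find_spike_days(daily_counts)
core = recurring_accounts(accounts_by_day, spikes)
print(spikes)
print(core)
```

In this toy data the two high-volume days stand out against a median of about 300 mentions, and only the handles present on both days are returned as a candidate core.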

‘Divisive Narratives’

“It’s not just like, oh there’s these kind of suspicious accounts that tweet about normal stuff, and just happen to all tweet about voter fraud on the exact same day,” Horvath said. “They’re accounts that operate at the same time, in the same ways, and are also involved in influencing other divisive narratives.”

Accounts within the network have promoted the idea that billionaire George Soros is funding the migrant caravan “invasion”; issued warnings about buses of undocumented immigrants being paid to vote; and fueled “false flag” theories that Democrats orchestrated last month’s rash of package bombs to make Republican President Donald Trump look bad.

In 2016, voter fraud became one of the newly elected Trump’s first obsessions, when he claimed without evidence that millions of illegal votes gave Democrat Hillary Clinton the edge in the popular-vote count.

The liberal-leaning Brennan Center for Justice in New York calls voter fraud extraordinarily rare. The conservative Heritage Foundation, which maintains a database of election-fraud cases meant to demonstrate the dangers, lists 1,165 instances and 1,011 criminal convictions over about 40 years. (Heritage points out that these cases involve far more than 1,165 ballots, and says its list is not meant to be comprehensive.)

States’ Restrictions

The specter of undocumented immigrants and phantom dead people casting ballots has driven legislation to tighten voting requirements in many states. Over the last two years, Arkansas and North Dakota passed voter ID bills while Georgia, Iowa, Indiana, New Hampshire and North Carolina enacted new restrictions, according to the Brennan Center.

Once the researchers noticed the heartbeat pattern and identified the accounts that pushed the spikes, they were left with questions. The accounts don’t look like fakes; many included photos of real people in their bio sections. Some have been around for many years, tweeting without much attention or many followers, though not necessarily always about politics.

Most had a dramatic surge, from little activity to thousands or tens of thousands of mentions in a day, according to the report. One account, @1776hotlips, joined Twitter in April and went from zero to sometimes tens of thousands of mentions a day, beginning on Aug. 20 and continuing until Twitter suspended the account recently.

Real People

The technology and techniques used to identify bot-driven accounts that relay disinformation often rely on measures like the ratio of followers to accounts followed or volume of retweets, Horvath said. Groups of accounts that exert influence through large, sudden increases in replies and mentions may evade such tools, he said.
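A rough Python sketch shows why the two kinds of signal can disagree. It assumes a simple follower-ratio check of the sort Horvath describes and a separate day-over-day test for mention surges. Both functions, their thresholds and the sample numbers are hypothetical and are not taken from the report or from any real detection tool.

```python
def follower_ratio(followers, following):
    """Followers divided by accounts followed (guarding against division by zero)."""
    return followers / max(following, 1)


def looks_bot_like(followers, following, min_ratio=0.1):
    """Hypothetical heuristic: flag accounts that follow far more users than follow them."""
    return follower_ratio(followers, following) < min_ratio


def has_mention_surge(daily_mentions, factor=100):
    """True if any day's mentions are at least `factor` times the previous day's.

    A previous-day count of zero is treated as one so the comparison stays defined.
    """
    return any(
        today >= factor * max(prev, 1)
        for prev, today in zip(daily_mentions, daily_mentions[1:])
    )


# Invented account: an ordinary-looking follower ratio but a sudden jump in mentions.
followers, following = 5000, 4000
daily_mentions = [0, 3, 2, 12000]

flagged_by_ratio = looks_bot_like(followers, following)
flagged_by_surge = has_mention_surge(daily_mentions)
print(flagged_by_ratio, flagged_by_surge)
```

The invented account passes the ratio check yet trips the surge check, which is the gap Horvath points to: a tool built around follower ratios or retweet volume would not register that kind of activity.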

Among these influential accounts, there are real people, tweeting out of deeply held beliefs -- and the report speculates that some of them don’t realize they’re part of a broader influence network. “A bad actor coordinating large numbers of accounts could find this person’s tweets useful, then amplify those tweets through thousands of @mentions and replies,” the researchers wrote.

Linda Suhler is definitely a real person. A retired molecular biologist and grandmother of four in Scottsdale, Arizona, she has some 374,000 followers. She has a lot of time on her hands to tweet, but she isn’t part of any organized Twitter rooms or retweet groups, and has never bought a follower, she said. She tweets on issues she cares about, voter fraud included.

“If you can figure out why so many followed an ordinary person in Scottsdale, Arizona, you tell me,” said Suhler, who laughed when called by a reporter. Unprompted, she joked: “I’m actually a bot.”

So is this really manipulation, or just the ultimate network effect of social media, bringing like-minded people together in an online community? Horvath said the patterns in the data show clear evidence of a coordinated campaign -- one that’s ramping up and will continue, even after the midterms.

“Why this matters in an ongoing way, a hypothesis, is that this network will be used to frame and influence the conversation about what happened in the election, whether close races, recounts, who voted illegally, etc.,” he said. “And that has implications for the public and lawmakers as they’re thinking about voter ID laws, voter suppression tactics and the Census.”

To contact the reporter on this story: Dune Lawrence in New York at dlawrence6@bloomberg.net

To contact the editors responsible for this story: John Voskuhl at jvoskuhl@bloomberg.net, Flynn McRoberts

©2018 Bloomberg L.P.