Someone Is Impersonating Me, and Twitter Doesn’t Care

(Bloomberg Opinion) -- This is a personal story.

It’s a tale not only of being impersonated on Twitter, but of the company’s seeming apathy in dealing with the problem of fake accounts, despite claims of caring about “information quality.”

Early Wednesday afternoon, Jon Russell of the technology news outlet TechCrunch sent me the link to a Twitter account. Russell has written about a rise in fake accounts and bot farms in Asia. The profile was an exact copy of my own, which starts with a reference to my job — Technology Columnist: Bloomberg Opinion Asia — and includes other details specific to me (Ironman athlete, vegetarian, novice coder).

A quick Google search found another account had done the same. Both had been created in October 2018, were purportedly based in Seoul, and had Korean-looking names. Each was following around 1,000 accounts and had around 200 followers of its own. At this point two things occurred to me: I’d not have come across this if it hadn’t been flagged to me; and Twitter’s search function is so poor that I had to use Google.

So I sent a direct message to one of Twitter Inc.’s Asia Pacific PR staff. The response was sympathetic, but I was advised to use Twitter’s reporting tool.

I reported each account separately for impersonation, and cross-referenced the other account in each report. An automated confirmation email was sent to me immediately with a case number.

The first response came 17 minutes later:

We’ve investigated the reported account and have determined that it is not in violation of Twitter’s impersonation policy.

How strange, I thought. The account, while not using my name, was not only copying very specific details but making a false claim about the account holder’s work and employer. I wrote back (to whom, I don’t know), stating my displeasure and saying I’d escalate if necessary.

I was still in contact with the PR department and gave the staffer the case numbers.

Soon after:

Thanks for bringing this to our attention. In response to your complaint, we’ve contacted the user and are requiring them to review their account.

I don’t know whether it was the PR department’s intervention that got this reviewed, or the person at the other end of my complaint. But I will note that my curt reply to the case received another automated response: “You tried to update a case that was closed.”

By the next morning, one account had changed its profile, revealing what I had earlier suspected: that this was a follower farm. Now it read: 500 Retweets = 500 followers! LIKE & RT if you follow back!! Turn my notifications ON Follow me #follow #instafollow #instantfollowback.

The other account was unchanged; my online doppelganger remained. But even the amendment to the first account wasn't good enough. An account created clearly to deceive shouldn't get a mulligan; it should be blocked.

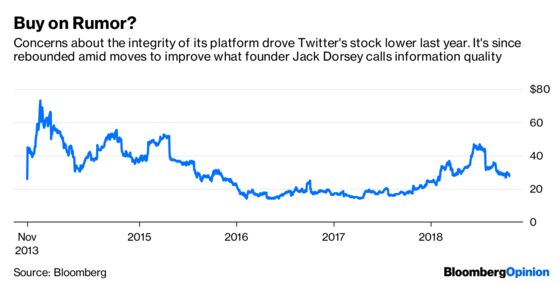

In his letter to shareholders in February, Twitter CEO Jack Dorsey introduced the term “information quality” to refer to efforts in “addressing all malicious activity on the service, and that’s inclusive of spam, malicious automations, and also fake accounts.”

I get that caution must be taken not to shut down parody, tribute or critique accounts. Yet there’s no way the company could look at these two examples and conclude there was any intention other than to deceive.

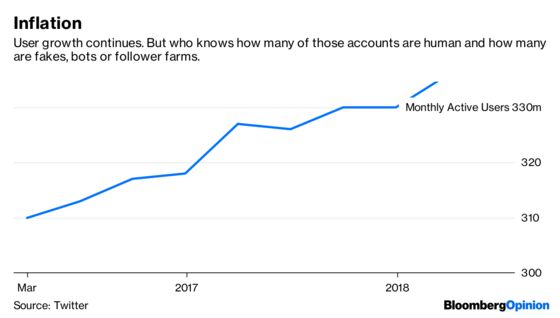

It’s probable that the humans at the other end of complaints like mine are overworked and make errors of judgment. Yet Twitter’s policies and execution betray the truth: At the institutional level, Twitter doesn’t really seem to care about fake accounts.

If the company were concerned, both accounts would have been recognized as deceptive in nature. Staff would have seen that most of the followers and followees of the accounts I flagged were fake (I note that many made reference to bitcoin or cryptocurrency in their profiles).

And Twitter certainly would have shut down accounts that were making demonstrably false claims about their job and employer, especially after the accounts had been flagged to company executives.

I outlined the case to another Twitter spokesperson, who declined to be identified by name and said the company doesn’t “disclose information about Twitter accounts for privacy and security reasons.” No more specific comment was given. Declining to be named is part of the problem, in my view.

If Dorsey and his team are concerned about the integrity of the platform they built, they should put adequate systems in place to protect users.

On Thursday, as Twitter released third-quarter earnings, Dorsey wrote in his quarterly letter to shareholders that operating expenses would continue to increase as the company seeks to reduce spam accounts and offensive content by adding headcount. But part of Twitter's problem is that it doesn't have the same resources as Facebook Inc. Twitter had third-quarter adjusted net profit of just $106 million: an improvement on the previous year, but a rounding error next to Facebook's expected $5.6 billion profit in the same period.

When it comes to vetting content, there's little effective substitute for human eyeballs. In the 12 months through June, Facebook added almost 50 percent more staff as it stepped up efforts to counter the rise of fake accounts and misleading or false information. Twitter can't do that as readily, so it has to lean more on artificial intelligence. That technology is improving, to be sure, but has a long way to go.

Of course Twitter needs data, and users, and engagement. But above all it needs to care enough to enable users to trust its service.

—With assistance from Bloomberg Opinion’s Alex Webb

To contact the editor responsible for this story: Paul Sillitoe at psillitoe@bloomberg.net

This column does not necessarily reflect the opinion of the editorial board or Bloomberg LP and its owners.

Tim Culpan is a Bloomberg Opinion columnist covering technology. He previously covered technology for Bloomberg News.

©2018 Bloomberg L.P.