Why Economics Has Trouble With the Big Problems

(Bloomberg Opinion) -- Physics is a very powerful and exact science. Physicists can predict how electricity will flow through the microscopic circuits in your computer, they can land a spaceship on the moon, they can even pick up a single atom. But when it comes to the origin of the universe, even the smartest physicists can’t give us definite answers. How did its early expansion work? Is there only one universe, or are there many? Because physicists can’t create new universes in a lab, they have to attack these questions using much less definite methods — making cosmic observations and applying theories from the lab.

This example illustrates a trade-off that any science is forced to make. Small, limited questions can be answered with confidence, but bigger questions are subject to much more uncertainty.

Economics is no different. Over the last three decades, microeconomists have come up with a series of techniques that can give fairly reliable answers to some empirical questions. These methods, collectively known as the credibility revolution, rely on two basic tricks.

The first is to find some random event or random cutoff in the world, and then look at what happens before and after the event, or on either side of the cutoff. For example, you could look at the effects of a sudden wave of war refugees to assess the effects of low-skilled immigration on native-born workers. This is known as a natural experiment or quasi-experiment.

The second trick is a randomized controlled trial, or RCT. This is when economists set up some sort of pilot program or large-scale experiment, to test whether some policy is effective in the real world.

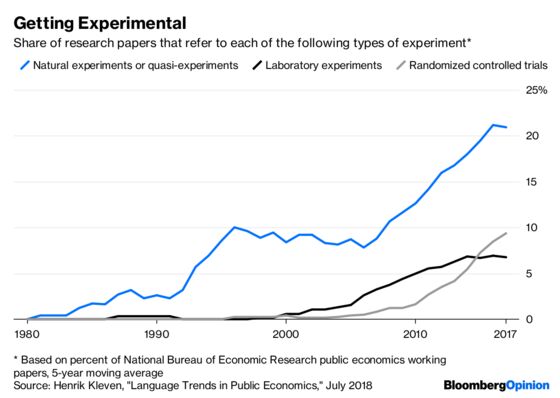

Together, these methods are taking over many fields of microeconomics. For example, economist Henrik Kleven has used text mining to measure the use of these techniques in the field of public economics, which deals with taxes, government spending and regulation:

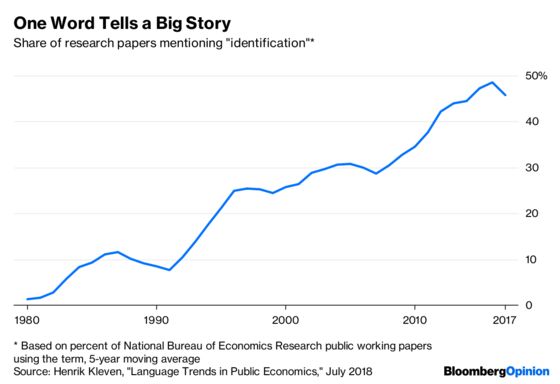

These new methods work well because they’re good at separating correlation from causation — something economists call identification. For example, there are lots of immigrants in New York, and wages there are generally high, but it was probably the latter that caused the former — people move to the city from around the world because they can earn a lot. However, if a big wave of war refugees suddenly shows up on your doorstep, you can be a lot more confident that changes in wages and employment levels were actually caused by the immigration. Studies like these are the reason economists now believe that low-skilled immigration has at most only a small negative effect on wages.
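To make the identification problem concrete, here is a minimal toy simulation in Python. It is only a sketch under invented assumptions, not a model taken from any study mentioned here: the names `simulate`, `ols_slope`, `base_wage`, `settled` and `refugees`, and every number in it, are hypothetical. Immigrants are assumed to be drawn to cities that already pay well, while the true effect of immigration on wages is set to a small negative value. Simply correlating immigration with wages then points the wrong way, while a refugee wave whose size has nothing to do with local wages recovers something close to the true effect.

```python
import random


def ols_slope(x, y):
    """Slope from a simple least-squares regression of y on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var = sum((a - mean_x) ** 2 for a in x)
    return cov / var


def simulate(n_cities=20000, true_effect=-0.1, seed=0):
    """Return (naive_slope, natural_experiment_slope) for a toy economy.

    All parameters are illustrative assumptions, not estimates.
    """
    rng = random.Random(seed)
    naive_x, naive_y = [], []
    shock_x, shock_y = [], []
    for _ in range(n_cities):
        # What the city would pay with no immigration at all (unobserved).
        base_wage = rng.gauss(0, 1)
        # Observational world: people move to where pay is already high.
        settled = 2.0 * base_wage + rng.gauss(0, 1)
        wage_before = base_wage + true_effect * settled + rng.gauss(0, 1)
        naive_x.append(settled)
        naive_y.append(wage_before)
        # Natural experiment: a refugee wave whose size is unrelated to pay.
        refugees = rng.gauss(0, 1)
        wage_after = (base_wage + true_effect * (settled + refugees)
                      + rng.gauss(0, 1))
        shock_x.append(refugees)
        shock_y.append(wage_after - wage_before)
    return ols_slope(naive_x, naive_y), ols_slope(shock_x, shock_y)


if __name__ == "__main__":
    naive, experiment = simulate()
    print(f"naive correlation slope:  {naive:+.2f}")
    print(f"natural-experiment slope: {experiment:+.2f}")
```

In this toy setup the naive slope comes out positive even though the assumed true effect is negative, because high wages attract immigrants. The slope based on the refugee wave lands near the assumed effect, because the size of the wave is independent of what each city already pays.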

More and more economics papers are focusing on identifying causation.

But as with physics, greater certainty comes at a price. The questions that economists can answer with the greatest confidence tend to be relatively narrow, like the effect of minimum wage in a single city, or the effect of education funding on labor-market outcomes. Those are important questions, to be sure. But there are a lot of really big or complex questions that can’t be attacked with RCTs or natural experiments.

This is the point economist Christopher Ruhm made in a recent essay entitled “Shackling the Identification Police?” Ruhm listed a number of interesting and important questions that economists probably can’t answer using the most reliable methods. He cited the effects of free trade on wages, the economic costs of global warming, and others.

But his list certainly wasn’t an exhaustive one. Many of the biggest issues in economics — the causes and consequences of recessions, the effectiveness of fiscal and monetary policy, the gains from trade, the effects of urbanization and the impact of federal taxes — can’t be effectively answered, or can only be partially addressed, by the new super-reliable techniques.

So what do economists do? Ignoring the big, difficult questions seems like a mistake. It’s not as if economists are in the dark on these issues — they can marshal a wide array of circumstantial evidence, theory and small-scale results to make some headway. Ruhm is right to call for academic journals to publish studies that use a variety of methodologies to attack big questions, even if they don’t provide definitive answers.

But for the public, and for policy makers, this presents a harder problem. Non-economists can’t easily tell the difference between a paper that provides rock-solid evidence and one whose answers are merely suggestive. There’s a danger that as the reliability of econ studies that use natural experiments and RCTs increases, studies with much weaker methods — for example, simply correlating corporate tax rates with wage growth, and concluding that tax cuts will boost wages — will be able to gain a credibility they don’t deserve.

So it’s up to economics pundits, and the news media, to inform people of the difference. Economists should attack the big, hard questions, but the public needs to know when they can really believe a study and when they should be skeptical of its results. Economics journals should publish papers with weak identification, but the media should remind the public that those papers must be taken with a large helping of skepticism.

To contact the editor responsible for this story: James Greiff at jgreiff@bloomberg.net

This column does not necessarily reflect the opinion of the editorial board or Bloomberg LP and its owners.

Noah Smith is a Bloomberg Opinion columnist. He was an assistant professor of finance at Stony Brook University, and he blogs at Noahpinion.

©2018 Bloomberg L.P.